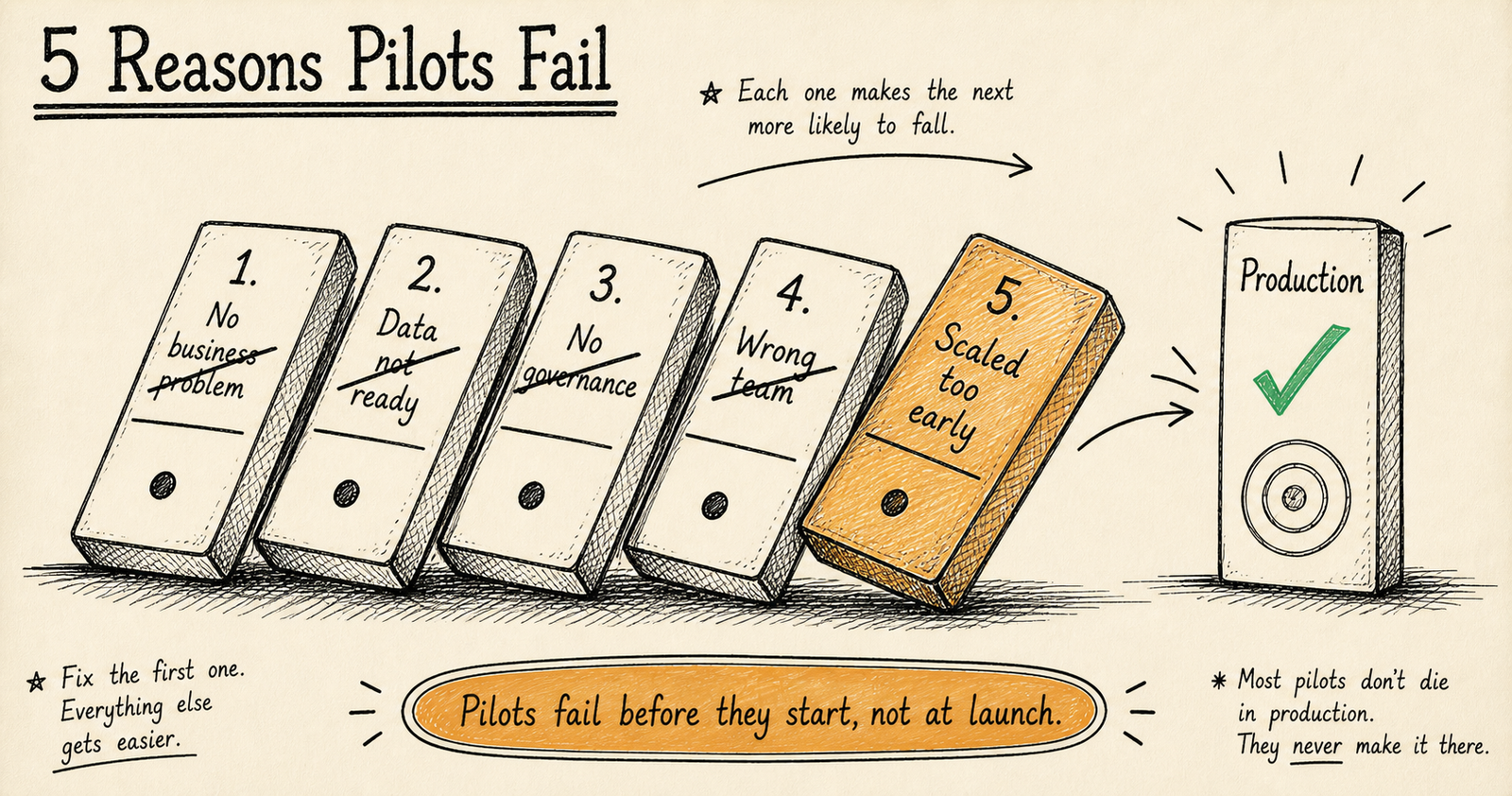

Why do AI projects fail? 87% of AI projects never reach production because of five recurring mistakes: unclear business objectives, poor data quality, no governance framework, wrong team structure, and scaling too fast. That number — from Gartner’s ongoing research into enterprise AI adoption — hasn’t improved much since 2020. If you’re planning an AI investment, understanding these failure modes is the single most important step you can take to protect your budget and timeline.

I’ve spent 19 years building software systems, and the last several focused specifically on AI implementation. I’ve seen projects fail for all five of these reasons — including some I was brought in to rescue. Here’s what actually goes wrong, and more importantly, how to prevent each one.

Reason 1 — The Business Problem Was Never Actually Defined

This is the most common and most expensive mistake. A leadership team reads about AI, gets excited, and tells their team: “We need to implement AI.” That’s not a business objective. That’s a technology preference.

Without a specific, measurable outcome, the project drifts. Engineers build technically impressive demos that solve no real problem. Stakeholders keep changing direction because there was never a fixed target. Three months and £80,000 later, the pilot gets quietly shelved.

“We want to use AI to improve our operations.”

“We want to reduce document processing time from 4 hours per batch to 90 minutes, with 95% accuracy, within 8 weeks.”

The specific version gives you three things the vague one doesn’t: a measurable target (time reduction), a quality bar (95% accuracy), and a deadline (8 weeks). You can evaluate whether the AI project succeeded or failed. With the vague version, you can’t — which is why those projects quietly die without anyone formally calling them a failure.

How to fix it

- Start with a specific process you want to improve — not a technology you want to adopt

- Define success metrics before writing a single line of code

- Get sign-off from whoever controls the budget on what “done” looks like

- If you can’t articulate the business value in one sentence, you’re not ready

Reason 2 — The Data Wasn’t Ready

AI is only as good as the data it learns from. This isn’t a cliche — it’s a budget reality. Most companies dramatically underestimate how much effort goes into data preparation. Industry benchmarks consistently show that 40–60% of total AI project time is spent on data cleaning, normalisation, and pipeline engineering. Not model building. Not fine-tuning. Data plumbing.

Here’s what “data not ready” actually looks like in practice:

- Inconsistent formats: Customer records in three different systems with three different schema conventions

- Missing values: 30% of critical fields are blank, making the dataset unreliable for training

- No historical depth: You need 18 months of data for a forecasting model, but only have 4 months of clean records

- Access restrictions: The data exists, but legal or compliance constraints prevent its use for training

- Bias in the source: The training data reflects historical patterns you actually want to correct, not replicate

I worked on a document processing project where the client assumed their PDF archives were ready for extraction. They weren’t. Half the documents were scanned images with no OCR layer, and the other half had inconsistent layouts across three years of template changes. We spent four weeks on data preparation before the AI component even started. That’s normal — but it wasn’t in the original timeline.

How to fix it

- Run a data audit before commissioning the AI project — not during it

- Budget 40–60% of project time for data preparation

- Check data volume, quality, format consistency, and access rights upfront

- If the data isn’t there, the honest answer is to fix the data problem first and build AI later

Reason 3 — Governance Was Bolted On After the Fact

This is the failure mode I care about most, because it’s the one with the longest tail of damage. Teams build a working AI model, start getting results, and then someone from compliance asks: “Wait — can we actually use customer data this way?”

The answer is usually no. And retrofitting governance into a system that wasn’t designed for it is brutally expensive.

In regulated industries — financial services under FCA rules, healthcare under HIPAA, legal services handling privileged data — governance isn’t optional. It’s not something you add in the last sprint. Compliance requirements must be design constraints from day one. That means audit trails, data lineage, explainability, access controls, and retention policies are part of the architecture, not a layer on top of it.

Governance-first AI is our core thesis. Every system we build starts with compliance mapping and data governance rules before any model architecture is chosen. It’s slower upfront. It saves months of rework later. We’ve written more about this approach in our services overview.

How to fix it

- Involve compliance and legal from the project kickoff — not the final review

- Map regulatory requirements to technical constraints before choosing your approach

- Design audit trails and explainability into the system architecture

- Document data flows, access permissions, and retention rules as part of the technical spec

- If your AI partner doesn’t ask about governance in the first meeting, that’s a red flag

Find out if your data and team are ready

Our AI Readiness Assessment identifies gaps in data, governance, and team structure before you invest in a pilot.

Get a Custom Estimate →Reason 4 — The Team Structure Was Wrong

A common pattern: the company hires a machine learning engineer (or contracts an AI vendor) and expects them to deliver a production system. The ML engineer builds a model with 94% accuracy in a Jupyter notebook. Six months later, it’s still in a Jupyter notebook because nobody planned for integration, deployment, monitoring, or end-user workflows.

Successful AI projects need three perspectives from the start:

Pure technology teams build technically impressive systems that nobody uses. Pure business teams write requirements that aren’t technically feasible. You need both perspectives collaborating from the beginning, not handing off between phases.

I’m a solo founder, which means I handle domain analysis, AI engineering, and deployment myself. This works well for focused projects where one person can hold the full context — document processing, lead scoring, workflow automation. It means I’m selective about what I take on. I won’t accept a project that genuinely needs a 10-person team, because I can’t be a 10-person team. That honesty saves both of us time and money.

How to fix it

- Ensure domain, engineering, and operations perspectives are present from kickoff

- Don’t isolate the AI work from the people who will actually use the output

- Plan for deployment and adoption from day one — not as a Phase 2 afterthought

- If your vendor or team can’t explain how the model gets into production, ask harder questions

Reason 5 — They Tried to Scale Before Proving Value

The boardroom version: “Let’s build an enterprise-wide AI platform.” Six months and £300,000 later, the platform exists but nobody uses it because it wasn’t validated against a real business need first.

The pattern that works is boring and sequential:

- Pick one process in one department with one measurable outcome

- Build a focused pilot — not a platform — to prove it works

- Validate the ROI with real numbers, not projections

- Then invest in scaling what you’ve proven

This is exactly why we designed the 21-day AI pilot: a contained engagement with a defined scope, a working proof of concept, and measurable results. If the pilot succeeds, you have evidence to justify a larger investment. If it doesn’t, you’ve spent weeks and a modest budget instead of months and a fortune.

Enterprise AI platform projects have a failure rate above 90%. Focused pilots with a single measurable objective succeed roughly 60–70% of the time. The difference isn’t technology — it’s scope discipline.

How to fix it

- Resist the urge to build a platform — build a pilot instead

- Define the smallest possible scope that still proves business value

- Set a hard time limit (21 days is a good boundary for most pilots)

- Only scale what’s been validated with real-world data and real users

How to De-Risk Your AI Investment

If you’re evaluating an AI project — whether you build it in-house, hire an agency, or work with a specialist like KORIX — use this checklist to protect your investment:

- Business outcome defined: Can you state the target improvement in one sentence?

- Data audit complete: Do you know the volume, quality, and accessibility of your data?

- Governance mapped: Are compliance requirements documented as technical constraints?

- Team roles clear: Do you have domain, engineering, and operations covered?

- Scope contained: Are you starting with one process, one department, one measurable outcome?

- Budget realistic: Have you allocated 40–60% of time for data preparation?

- Success criteria agreed: Does everyone involved know what “done” looks like?

- Iteration planned: Is there budget for at least 2–3 rounds of refinement after the initial build?

If you can check all eight boxes, your project has a significantly higher chance of reaching production. If you can’t, the gap tells you exactly where to focus before committing budget.

The best AI investment you can make is the one that starts with honest preparation. The worst is the one that starts with excitement and skips the hard questions.

87% of AI projects fail — but the reasons are predictable and preventable.

Define a specific business outcome. Audit your data. Build governance in from day one. Get the right team structure. Start with a pilot, not a platform. These five steps won’t guarantee success, but they’ll put you in the 13% that reaches production.

Recommended Reading

What percentage of AI projects fail?

87% of AI projects never reach production, according to Gartner’s ongoing research. The primary reasons are unclear objectives, poor data quality, missing governance, wrong team structure, and premature scaling.

How much should I budget for data preparation?

Budget 40–60% of total AI project time for data cleaning, normalisation, and pipeline engineering. This is not overhead — it’s the foundation. A model trained on messy data produces messy results. Read more about AI cost breakdowns.

What is the best way to start an AI project?

Start with a focused pilot targeting one process, one department, one measurable outcome. Our 21-day AI pilot validates whether AI can solve your specific problem before committing to a full build.

Why is AI governance important?

Governance ensures compliance with regulations (FCA, HIPAA, GDPR), provides audit trails and explainability, and prevents costly retrofitting. It must be a design constraint from day one, not an afterthought. Learn about our governance-first approach.

Should I build an AI platform or start with a pilot?

Always start with a pilot. Enterprise AI platform projects have a failure rate above 90%. Focused pilots with a single measurable objective succeed roughly 60–70% of the time. The difference is scope discipline, not technology.