Governed AI implementation does not have to take six months. When governance constraints become the first design input — not a final-stage checkpoint — service businesses can deploy compliant, production-ready AI systems in weeks.

Most teams learn this the hard way. They build an AI workflow, get excited about early results, then hand it to legal or IT security for review. What follows is a familiar sequence: a list of questions nobody anticipated, a round of redesign, another review, and a launch date that keeps moving. By the time the system ships, the team that built it has lost momentum, and the business case has gone cold.

There is a better sequence. This article explains it — including the named framework KORIX uses to help UK and US service businesses deploy governed AI without that delay.

If you are still deciding whether your business is ready for this, the AI readiness assessment takes two minutes and tells you where you actually stand.

Why Governance Breaks Most AI Rollouts

The standard playbook looks like this: select a tool, build a workflow, test it internally, then submit it for security and compliance review. Governance lives at the end of the process — a gate to pass through before go-live.

The problem is structural. When you design a system without governance inputs, you make dozens of micro-decisions that each carry compliance implications: where data is stored, which model processes it, what gets logged, who can override an output. By the time security reviews the system, those decisions are baked into the architecture. Changing them is expensive, slow, and demoralising.

The common assumption is that governance review catches problems before launch. In practice, it creates them — because it forces redesigns on systems that were never built with compliance constraints in mind. Governance is not a test you pass. It is a set of requirements you design to.

McKinsey's State of AI 2024 report found that organisations reporting the highest AI adoption rates are significantly more likely to have formal AI governance processes in place before deployment begins — not after. The direction of causality matters: governance enables adoption, it does not restrict it.

Gartner's research consistently shows that AI projects fail at the governance stage more often than at the technical stage. The systems work. They just cannot get through the approval process because nobody thought about the approval process when they were designing the systems.

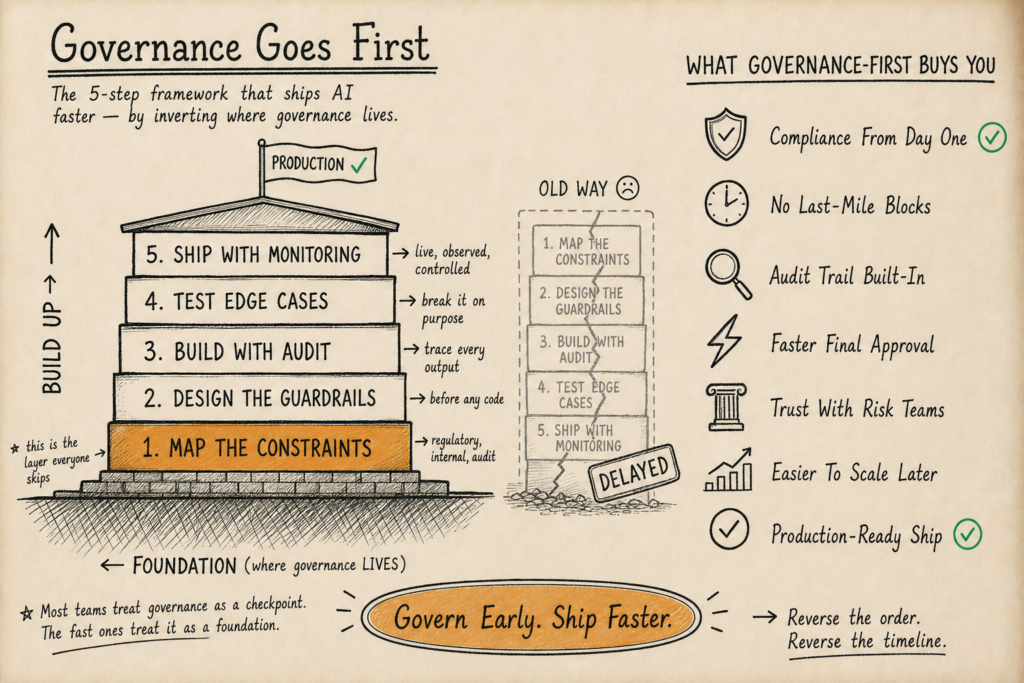

This is the problem the Governance-First Deployment Framework is built to solve.

The Governance-First Flip: Why Sequence Changes Everything

The insight is simple but non-obvious: governance constraints are just requirements. And requirements belong at the start of a project, not the end.

When you know upfront that your system cannot store personally identifiable data outside a specific jurisdiction, that constraint shapes your architecture from day one. You do not build the wrong thing and then fix it. You build the right thing first.

"AI is the new electricity. Just as electricity transformed almost everything a hundred years ago, today I actually have a hard time thinking of an industry that I don't think AI will transform in the next several years." — Andrew Ng, Founder of DeepLearning.AI. Source

That transformation only compounds when businesses can actually deploy the systems they build — which requires governance to be a design primitive, not an afterthought.

The Governance-First Flip reframes the question your team asks at the start of every AI project. Instead of "what do we want this system to do?", the first question becomes "what constraints must this system operate within?" The answer to the second question defines the architecture. The answer to the first question defines the features. In that order.

This is not about slowing down. It is about removing the costly redesign loop that slows down every team that skips it.

For a broader grounding in what governed AI actually means, what is governed AI covers the principles behind this approach.

The Governance-First Deployment Framework (5 Steps)

This is the framework KORIX uses across client deployments. It is designed for service businesses with 20–150 staff — organisations with real compliance exposure but without a dedicated AI governance team.

Step 1: Constraint Mapping Before Scope Definition

Before writing a single line of a project brief, document the non-negotiables. These fall into four categories:

- Data residency — where is the data processed and stored? Does GDPR, UK GDPR, or CCPA apply?

- Model access — which models are approved? Are there restrictions on sending client data to third-party APIs?

- Audit requirements — does the output need to be explainable, logged, or reversible?

- Human oversight thresholds — at what decision point must a human be in the loop?

This mapping session typically takes two to four hours. It produces a one-page constraints document that becomes the first input to architecture decisions. Every subsequent design choice is evaluated against it.

Most businesses have never produced this document. When they do, the number of surprises during security review drops to near zero.

Step 2: Architecture Selection Against Constraints

With the constraints document in hand, you select your architecture — not your tooling. Architecture selection means deciding: where does inference happen, how does data flow, what gets persisted, and who controls access?

This is where the build vs buy decision becomes concrete. Off-the-shelf AI platforms are fast to deploy but often fail constraint mapping — particularly around data residency and audit logging. Custom-built systems take longer upfront but clear the governance review in a fraction of the time.

Most teams treat architecture as a technical choice and governance as a compliance choice. They are the same choice. When you decide that inference happens inside your existing Microsoft 365 environment rather than on a third-party platform, you have simultaneously made a technical decision and a governance decision. Separating them creates the redesign loop. Unifying them eliminates it.

KORIX's Bring Your Own Stack philosophy is built on this principle. By deploying AI agents inside the client's existing software stack — rather than introducing a new platform — the data residency, access control, and audit trail questions are often answered by the existing IT policy rather than requiring new governance frameworks.

Step 3: A Pilot Scoped to Governance Boundaries

The pilot is not a proof of concept for the technology. It is a proof of concept for the governance model.

This distinction matters. A technology POC asks: "can AI do this task?" A governance POC asks: "can AI do this task within our compliance constraints, and can we demonstrate that it did?"

The scope of the pilot is deliberately narrow — one workflow, one data type, one department. The KORIX 21-Day AI Pilot is structured this way: a single, bounded use case that ships to production within 21 days, operating entirely within the governance constraints identified in Step 1.

Narrow scope means fewer variables. Fewer variables mean a cleaner audit trail. A clean audit trail means the security review is a formality, not a blocker.

For teams evaluating whether a 21-day timeline is realistic for their situation, 7 signs your business is ready for AI provides an honest checklist.

Step 4: Compliance-Integrated Testing

Standard QA testing checks whether a system does what it is supposed to do. Compliance-integrated testing checks whether the system does what it is supposed to do and leaves the right evidence that it did.

This means adding three testing layers on top of standard functional tests:

- Data flow verification — confirm that data is processed only in approved locations and that no unapproved external calls are made.

- Audit log review — verify that every significant action is logged in a format that satisfies your audit requirements.

- Override testing — confirm that human override mechanisms work correctly, that they are accessible to the right people, and that the override action is itself logged.

These tests take a few hours to run. They produce documentation that makes security review straightforward. Skipping them is the single fastest way to extend your security review from two weeks to eight.

For service businesses processing client documents, best document processing tools covers the compliance considerations specific to document-heavy workflows.

Step 5: Staged Rollout with Governance Checkpoints

The final step is a staged rollout — not a big-bang launch. The stages are:

- Shadow mode — the AI system runs in parallel with the existing process, generating outputs that are reviewed but not acted on. This stage validates accuracy without compliance exposure.

- Assisted mode — staff receive AI-generated recommendations and approve or reject them. The human is still the decision-maker. This stage builds trust and surfaces edge cases.

- Autonomous mode — the system operates without per-action human approval, within the boundaries defined in Step 1. A human reviews aggregate outputs and exceptions.

Each transition between stages is a governance checkpoint. Moving from shadow to assisted requires sign-off from the process owner. Moving from assisted to autonomous requires sign-off from the compliance lead. The checkpoints are documented, and the documentation becomes part of the audit trail.

This staged approach is what allows service businesses to operate under real compliance constraints — UK GDPR, FCA guidelines, SRA requirements, HIPAA — without pausing their AI rollout for a six-month review cycle.

What This Looks Like in Practice

The clearest verified example in KORIX's portfolio is Anna Mazurowska's business, Numerology-Matrix. Before the AI system was built, each client reading took three to four hours of manual research, synthesis, formatting, and delivery. The process was accurate but unscalable — Anna was the bottleneck in her own business.

The KORIX system reduced that to eight minutes per reading. A 95% reduction in time per client. An 8x increase in capacity. Those numbers are verified via Anna's published Clutch review.

What made the deployment fast was not cutting corners on quality. It was building with a clear constraints map from day one: Anna's client data had specific handling requirements, the outputs needed to be reviewable and correctable, and the system had to work within her existing tooling rather than requiring a new platform. Those constraints, defined upfront, shaped every architecture decision. The result was a system that went from brief to production in four months — with no post-launch governance surprises.

"We've always believed that the responsible development of AI is not at odds with the rapid development of AI. You can do both at the same time if you build the right foundations." — Dario Amodei, CEO of Anthropic. Source

The Governance-First Framework is what "the right foundations" looks like in practice for a service business.

How Deployment Approaches Compare

Not every approach to governed AI implementation is equal. The table below compares five common deployment models across four dimensions relevant to service businesses with 20–150 staff.

| Deployment Approach | Time to Production | Governance Integration | Ownership Model | Compliance Risk at Launch |

|---|---|---|---|---|

| Off-the-shelf SaaS AI tool | 1–4 weeks | Minimal — platform-defined | Licensed, not owned | High — data residency often unclear |

| No-code AI platform | 2–8 weeks | Partial — dependent on platform policy | Licensed, not owned | Medium — audit logging often limited |

| Consultancy-led platform migration | 6–18 months | Retrofitted — governance added post-build | Platform-dependent | Medium after review, high during build |

| Custom build without governance framework | 8–16 weeks | Retrofitted — security review at end | Owned | High — redesign loop likely |

| Governance-First custom deployment (KORIX model) | 3–8 weeks | Native — constraints define architecture | Owned, source code delivered | Low — compliance-integrated from day one |

No-code AI platforms are the fastest to deploy but carry the highest residual compliance risk for businesses with regulated data. The Governance-First model takes slightly longer than a no-code tool but ships with significantly lower post-launch exposure — and without the recurring seat license cost.

For a detailed breakdown of what each model typically costs, what custom AI implementation costs in 2026 covers the numbers honestly.

Want a Realistic Plan for Your Project?

No sales pitch. We will give you an honest read on what your situation actually needs, what it should cost, and whether AI is even the right tool here.

Book a Discovery Call →The Mistakes That Still Happen

Even with a clear framework, three failure patterns repeat across service business AI deployments.

Mistake 1: Treating the constraints document as a one-time exercise. Governance constraints change. Regulations update. Your data handling practices evolve. The constraints document should be a living input that is reviewed at the start of each new AI project — not a document that sits in a folder after the first session.

Mistake 2: Scoping the pilot too broadly. The temptation is to prove as much value as possible in the pilot. The risk is that a broad pilot touches multiple data types, multiple departments, and multiple compliance domains simultaneously. A narrow pilot that clears governance review cleanly is worth more than a broad pilot that stalls in review for three months.

Mistake 3: Skipping the assisted mode stage. Teams that move directly from shadow mode to autonomous mode skip the stage where staff build genuine trust in the system's outputs. The result is either over-reliance (staff stop reviewing outputs that need human judgment) or under-adoption (staff distrust the system and route around it). Assisted mode is where trust is earned.

Every week a team delays formalising their governance constraints, they are making undocumented architecture decisions that will need to be unwound. This is not a philosophical risk — it is a practical one. The cost of retrofitting governance into a deployed system is typically 40–60% of the original build cost, based on patterns observed across software delivery projects. Build the constraints document first.

Stanford HAI's AI Index reports consistent findings that AI deployment failures in enterprise settings are more often attributable to governance and process gaps than to technical performance gaps. The technology works. The surrounding structure often does not. The common AI failure modes article covers the specific patterns in more detail.

Choosing the Right Partner for Governed Deployment

The framework is only as good as the team implementing it. A partner who treats governance as a checkbox will still retrofit it. Look for three things when evaluating an implementation partner for governed AI deployment.

First: do they ask about constraints before they ask about features? A partner who leads with "what do you want the AI to do?" before understanding your data environment, regulatory exposure, and audit requirements is building without a foundation. The how to evaluate an AI partner guide has a full checklist for this evaluation.

Second: do they offer ownership? Governed AI systems need to be auditable, modifiable, and controllable — long after the implementation partner has left. If you cannot access the source code, you cannot meaningfully govern the system.

Third: can they show you a real deployment timeline? Not an estimated timeline built on assumptions. A real one, with milestones and a production guarantee. KORIX's 21-Day AI Pilot is structured as a production guarantee — if you do not have a production AI system on Day 22, you do not pay the second invoice.

Deloitte's research on enterprise AI adoption consistently identifies "lack of trust in AI outputs" as a top barrier to scaling — and that distrust typically originates from deployments that lacked auditable, explainable outputs from the start. The Governance-First approach directly addresses this: when every output is logged and every decision point is documented, the trust problem becomes tractable.

For teams evaluating multiple options, the build vs buy decision framework provides a structured comparison, and measuring AI ROI explains how to quantify the returns from governed deployment against your baseline.

The AI agent library shows the specific agents KORIX deploys within client stacks — each one built with the Governance-First Framework as standard.

The Framework in One Place

The Governance-First Deployment Framework, summarised:

- Constraint Mapping — document data residency, model access, audit requirements, and human oversight thresholds before writing a project brief.

- Architecture Selection Against Constraints — choose your architecture based on what the constraints allow, not what the technology makes easy.

- Governance-Scoped Pilot — run a narrow, bounded pilot that proves the governance model works, not just the technology.

- Compliance-Integrated Testing — add data flow verification, audit log review, and override testing on top of standard functional QA.

- Staged Rollout with Governance Checkpoints — move through shadow mode, assisted mode, and autonomous mode with documented sign-off at each transition.

This sequence does not slow down deployment. It removes the redesign loop that slows down every team that skips it.

IBM's Institute for Business Value has found that organisations with formal AI governance frameworks report higher AI ROI and faster time-to-value than those without — precisely because governed systems clear the approval process and reach production, rather than stalling in review. The governance investment pays for itself in deployment speed.

The Governance-First Deployment Framework's 5 steps eliminate the redesign loop that turns 6-month security reviews into 3-to-8-week production deployments for service businesses.

Governed AI implementation fails when governance is treated as a final-stage checkpoint rather than a first design input. The Governance-First Deployment Framework inverts this sequence: constraints are mapped before scope is defined, architecture is selected against those constraints, and compliance is tested as a native part of QA — not an afterthought. The result is AI systems that clear security and compliance review quickly because they were built to pass it from day one. For service businesses with 20–150 staff, this approach makes the difference between a deployment that ships in weeks and one that stalls indefinitely.

Continue learning —

go deeper.

What is governed AI implementation?

Governed AI implementation means deploying AI systems with documented controls around data handling, model access, audit logging, and human oversight — built into the architecture from day one rather than added after the fact. For service businesses, this typically covers GDPR or UK GDPR compliance, audit trail requirements, and human override mechanisms. The key distinction from standard AI deployment is that governance constraints are treated as design inputs, not final-stage checkpoints. This prevents the costly redesign loops that delay most AI rollouts and ensures the system can survive a security or compliance review without being rebuilt. See [what is governed AI](/learning-center/what-is-governed-ai/) for a full explainer.

How long does governed AI implementation actually take?

For a service business with 20–150 staff, a well-scoped governed AI deployment — using the Governance-First Framework — typically takes three to eight weeks from brief to production. The critical variable is scope. A narrow, single-workflow pilot with a clear constraints document can reach production in 21 days. A broader deployment touching multiple departments or data types takes longer, primarily because the constraint mapping and compliance-integrated testing phases scale with complexity. The six-month timelines most teams experience are a product of retrofitting governance onto systems that were built without it — not an inherent property of governed deployment.

What is the Governance-First Deployment Framework?

It is a five-step sequence developed by KORIX for deploying AI in service businesses with real compliance exposure. The steps are: (1) Constraint Mapping before scope definition, (2) Architecture Selection against those constraints, (3) a governance-scoped pilot, (4) compliance-integrated testing, and (5) a staged rollout with documented checkpoints. The framework flips the conventional sequence — governance becomes the first design input, not the last gate. The result is a system that clears security and compliance review quickly because it was built to pass that review from the start.

Does governed AI implementation cost more than standard AI deployment?

Upfront, the constraint mapping and compliance-integrated testing phases add time — typically a few days on a 21-day pilot. Over the full project lifecycle, governed deployment almost always costs less, because it eliminates the redesign loop. Retrofitting governance into a deployed system typically costs 40–60% of the original build cost. The governance investment in the early stages is significantly cheaper than the redesign cost it prevents. [What custom AI costs in 2026](/learning-center/ai-implementation-cost/) covers the full cost breakdown.

Can small service businesses (under 50 staff) realistically implement governed AI?

Yes — and the Governance-First Framework is specifically designed for businesses in the 20–150 staff range that have real compliance exposure but no dedicated AI governance team. The constraint mapping session is a two-to-four hour exercise, not a multi-month programme. The key is scoping the first deployment narrowly: one workflow, one data type, one department. A narrow governed deployment that works is worth more than a broad ungoverned deployment that stalls. The [AI readiness assessment](/learning-center/ai-readiness-assessment/) will tell you in two minutes whether your business has the foundations in place.

What compliance frameworks does governed AI implementation need to address?

For UK service businesses, the primary frameworks are UK GDPR (data residency, lawful basis, data subject rights), sector-specific requirements (FCA guidelines for financial services, SRA requirements for legal, CQC standards for health and social care), and internal audit requirements. For US businesses, the relevant frameworks depend on sector and state: HIPAA for health data, SOC 2 for technology businesses handling client data, and CCPA for businesses with California-resident clients. The constraint mapping session in Step 1 of the Governance-First Framework is designed to surface the applicable requirements for your specific context before any architecture decisions are made.

How do I know if my AI partner is taking governance seriously?

Three signals. First: do they ask about your data environment and compliance constraints before they ask about features? A partner who leads with capabilities rather than constraints is building without a governance foundation. Second: do they deliver source code ownership? You cannot govern a system you cannot inspect or modify. Third: can they show you how governance checkpoints are embedded in their deployment timeline — not added at the end? The [how to evaluate an AI partner](/learning-center/how-to-evaluate-ai-partner/) guide has a full evaluation checklist.