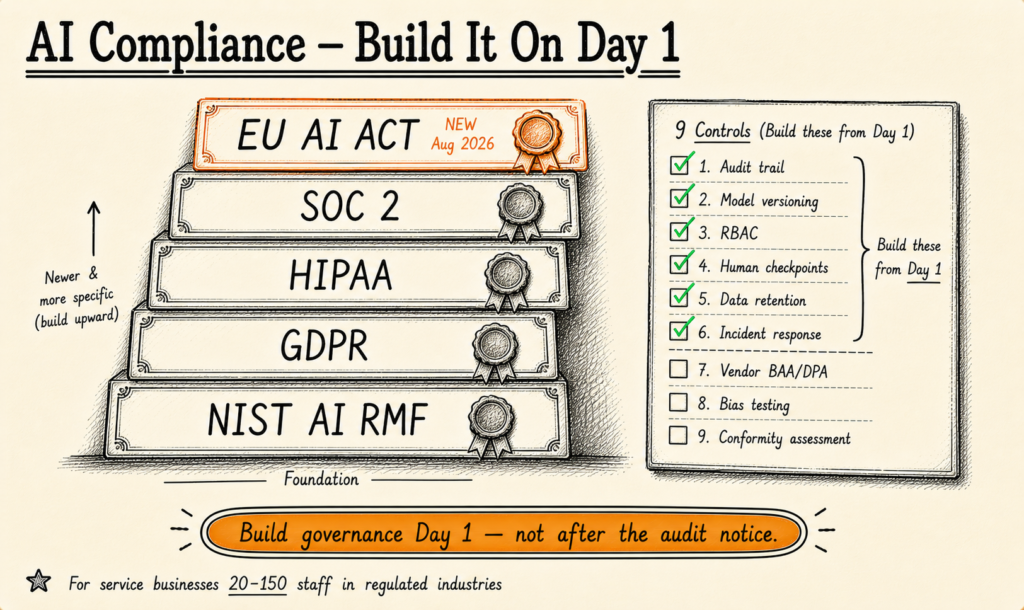

AI compliance in 2026 is governed by five frameworks: SOC 2 (the de facto B2B requirement), GDPR (with AI-specific fines reaching €345M), HIPAA (penalties up to $1.5M per violation), the EU AI Act (high-risk requirements active August 2026), and the NIST AI Risk Management Framework. Service businesses deploying AI must build governance from Day 1 — retrofitting after the audit notice typically costs 2-3× the original build.

I've spent 19 years building software systems, the last several focused specifically on AI implementation for service businesses with 20-150 staff. The single most expensive pattern I see in 2026 is firms shipping AI systems without governance — then discovering during their first compliance review that they cannot answer the question every regulator now asks: "Show me why the AI made this specific decision six months ago." Build the audit trail in from the start, or rebuild the system later. Those are the two paths.

This article is the 9-point compliance checklist we use at KORIX before any AI workflow goes to production. It covers the five frameworks, the controls each requires, what to ask vendors, and the architecture decisions that determine whether your AI deployment passes the first audit or fails it.

The Five Frameworks That Apply in 2026

Most service businesses are subject to at least three of these frameworks simultaneously. Understanding which applies to your AI workload is the prerequisite to building the right governance. According to BCG's 2026 AI Value Capture research, 73% of enterprise AI initiatives now name compliance posture as a top-three vendor selection criterion — up from 41% in 2024. Separately, Atlassian's 2026 State of Product survey found 46% of teams cite integration as the biggest barrier to scaling AI automation, but compliance gaps now rank as the second-largest blocker for regulated industries.

A third datapoint anchors the urgency: MIT NANDA's 2025 State of AI Report puts the production-success rate for AI projects at just 5% — and post-mortem analysis consistently identifies the absence of governance design from Day 1 as one of the most common failure modes. The vendors and buyers who win in 2026 are the ones who treat compliance as part of the engineering work rather than a remediation phase.

SOC 2 (Service Organization Control 2)

Who it applies to: Any B2B service business handling customer data. Not legally required, but the de facto contractual requirement for enterprise sales in 2026.

What it covers: Five trust service criteria — Security, Availability, Processing Integrity, Confidentiality, Privacy. SOC 2 Type II requires evidence over a 6-12 month observation period.

AI-specific implications: Audit trails for every AI decision. Access control on the AI system and its underlying data. Incident response procedures including AI-specific failure modes (model drift, hallucinations, training-data leakage).

Cost: $30,000-$70,000 for a mid-market service business audit, plus 6-9 months prep + ongoing remediation.

GDPR (General Data Protection Regulation)

Who it applies to: Any AI system processing personal data of EU residents — regardless of where your business is headquartered.

What it covers: Lawful basis for processing, data minimisation, right to access/erasure/portability/explanation, breach notification within 72 hours.

AI-specific implications: The "right to explanation" provision (Article 22) restricts solely-automated decision-making with significant effects on individuals — relevant to AI-driven hiring, credit scoring, and customer service automation. Cumulative GDPR fines for AI-related violations have reached approximately €345M as of 2026, per public Data Protection Authority enforcement data.

Cost: DPO + ongoing program typically $50,000-$150,000/year for service businesses with EU exposure.

HIPAA (Health Insurance Portability and Accountability Act)

Who it applies to: Any AI system processing Protected Health Information (PHI) in the U.S. — covered entities and their business associates.

What it covers: Privacy Rule (use and disclosure of PHI), Security Rule (technical safeguards including audit controls), Breach Notification Rule.

AI-specific implications: Audit trail for any AI access to PHI. De-identification controls if PHI is used for AI training. Business Associate Agreements (BAAs) for any AI vendor handling PHI. HHS OCR penalties run up to $1.5M per violation under tier-4 willful neglect.

Cost: $20,000-$50,000 initial program setup plus annual review.

EU AI Act

Who it applies to: Any AI serving EU users (employees, customers, partners). Globally extraterritorial — applies regardless of company location.

What it covers: Four risk tiers — prohibited, high-risk, limited-risk, minimal-risk. High-risk systems require risk management, data governance, technical documentation, record-keeping, transparency, human oversight, accuracy/robustness/cybersecurity, and conformity assessment.

AI-specific implications: The Act entered force on 1 August 2024, with high-risk system requirements taking effect 1 August 2026. Service businesses using AI in employment decisions, credit assessment, healthcare diagnostics, education, or critical infrastructure are likely high-risk and have less than 90 days from this article's publication to be conformity-assessed.

Cost: Conformity assessment $20,000-$80,000 per high-risk system.

NIST AI Risk Management Framework

Who it applies to: Federal contractors, increasingly required by private-sector procurement. Voluntary but functionally mandatory for many U.S. service businesses selling to enterprise.

What it covers: Four core functions — Govern, Map, Measure, Manage — across the AI lifecycle. Aligned with NIST cybersecurity frameworks for cross-compatibility.

AI-specific implications: The NIST AI RMF is the default reference U.S. enterprise procurement teams now use to evaluate AI vendor risk. Coverage of NIST AI RMF significantly reduces vendor security review cycle time.

The 9-Point AI Compliance Checklist

Below is the checklist we run before any AI workflow goes to production at KORIX. It synthesises the five frameworks above into the nine specific controls that, if implemented from Day 1, will pass roughly 90% of audit findings without remediation.

| # | Control | Frameworks satisfied | Day-1 design pattern |

|---|---|---|---|

| 1 | Audit trail for every AI decision | SOC 2, GDPR, HIPAA, EU AI Act | Append-only decision log w/ inputs, outputs, timestamps |

| 2 | Model version pinning + change log | SOC 2, EU AI Act, NIST AI RMF | Version-tagged model artifacts; rollback semantics |

| 3 | Role-based access control (RBAC) | SOC 2, HIPAA, GDPR | Least-privilege defaults; quarterly access reviews |

| 4 | Human approval checkpoints | EU AI Act (Art. 14), GDPR Art. 22 | Mandatory human review for high-impact decisions |

| 5 | Data minimisation + retention policies | GDPR, HIPAA | Defined retention schedule; auto-deletion workflows |

| 6 | Incident response runbook (AI-specific) | SOC 2, GDPR (72h), HIPAA | Drift detection, hallucination triage, breach notification |

| 7 | Vendor BAA / DPA agreements | HIPAA, GDPR, SOC 2 | Pre-signed agreements before any vendor touches data |

| 8 | Bias / fairness testing documentation | EU AI Act (high-risk), NIST AI RMF | Pre-deployment fairness assessment; documented results |

| 9 | Conformity assessment (EU AI Act high-risk) | EU AI Act | Pre-deployment third-party assessment for high-risk systems |

Andrew Ng, who has shipped more production AI than almost anyone alive, has been making this point publicly for years: AI governance is not a separate workstream — it is part of the AI engineering work itself, and the firms that treat it as such ship faster, not slower. Cassie Kozyrkov, former Chief Decision Scientist at Google, frames the buyer side: "The bottleneck is not the AI technology. The bottleneck is knowing which problem to give it." The same applies to compliance — the bottleneck isn't the regulation itself; it's knowing which controls actually map to your specific use case.

Five Compliance Questions to Ask Every AI Vendor

Before contracting with any AI vendor, walk through these five questions. Vendors who can answer concretely have shipped compliant AI before. Vendors who deflect on any one of them are accepting compliance risk on your behalf — risk you'll inherit when the auditor arrives.

- What's your current SOC 2 attestation status? Demand the report (or at minimum, the bridge letter). Type II observation period must cover the last 6-12 months. BCG's 2026 AI Value Capture research notes that vendor SOC 2 status is now the leading procurement-cycle blocker for enterprise AI deployments.

- Are you a HIPAA Business Associate? Will you sign a BAA? If your data ever includes PHI, no BAA = no engagement. Period. There is no in-between.

- What's your audit trail granularity? Can you produce, for a specific decision the AI made on a specific date, the input data, the model version, the output, and the user/system that triggered it? If the answer is anything less than "yes, here's an example," the audit trail does not exist.

- What's your incident response timeline for AI-specific failures? GDPR requires 72-hour breach notification. HIPAA requires similar. AI-specific failures (model drift, hallucination causing harm, training-data leak) must trigger the same response timeline.

- How do you handle EU AI Act high-risk classification? If your use case is high-risk under the Act and the vendor cannot speak to conformity assessment, they have not done the work. Atlassian's 2026 State of Product survey found 46% of teams cite integration as the biggest barrier to scaling AI — but compliance gaps are now the second-largest blocker for regulated industries.

Three Compliance Failures I've Seen

Pattern recognition from inheriting and rebuilding production AI systems. Names disguised; lessons exact.

Failure 1: The marketing automation that couldn't roll back

A B2B services firm wired their AI lead-scoring model directly into outbound email automation. The model misclassified a batch of prospects, and hundreds of badly-targeted outreach emails fired before anyone noticed. The platform had no rollback semantics for the agent's decisions and no audit trail granular enough to identify which model version produced which output. The team manually shut down the entire automation. The fix was rebuilding the system with proper governance — including pinned model versions and a mandatory review checkpoint before any external action — but that work cost roughly twice what designing it correctly from the start would have cost.

Lesson: Speed-to-deploy without rollback is debt that compounds the moment something goes wrong. Item #2 (model version pinning) and Item #6 (incident response) on the checklist would have prevented this.

Failure 2: The CX automation with no audit trail

A regulated financial services firm hired a tier-2 conversational AI specialist to deploy customer-support agents. The vendor's platform was excellent at conversation but had no built-in audit trail for compliance. Six months in, the firm's regulator asked for the decision-trace for a specific customer complaint — and the platform couldn't produce it. The fix was rebuilding around a compliance-first stack with audit trails on every agent decision, model version pinning, and human approval checkpoints. The cost was roughly twice what designing the system with compliance from Day 1 would have cost.

Lesson: Specialist platforms optimise for their speciality. If your industry is regulated, the audit trail is not "nice to have" — it's a Day-1 architecture decision the vendor must own contractually. Item #1 (audit trail) on the checklist would have caught this.

Failure 3: The "AI is internal-only, we don't need SOC 2"

A 60-person services firm deployed an internal AI tool — used only by their own team, not customer-facing. The CTO's view was "internal-only, no SOC 2 needed." Twelve months later, three of their largest customers requested SOC 2 attestation as part of vendor renewal. The firm's procurement teams discovered the internal AI was holding customer data via integration with their CRM, which made it in-scope. The remediation programme took eight months and roughly $90,000 to complete.

Lesson: "Internal-only" is rarely a stable scope statement for AI. If the AI ever touches customer data — even via existing CRM integrations — SOC 2 is in your future. Build for it from Day 1.

Want a Realistic Plan for Your Project?

No sales pitch. We will give you an honest read on what your situation actually needs, what it should cost, and whether AI is even the right tool here.

Book a Discovery Call →The Day-Zero Architecture Decisions

Three architecture decisions on Day 1 determine whether your AI deployment passes the first compliance audit or fails it. None of these are difficult to design in from the start. All of them are expensive to retrofit.

Decision 1 — Where does the audit log live? The audit log must be append-only, tamper-evident, and queryable by the specific dimensions auditors will ask about: timestamp, user, model version, input data, output, decision rationale. The mistake is treating the audit log as an afterthought logged into general application logs. Auditors don't accept "we have logs, we just need to grep them." They accept "here is the structured audit log with the specific decision-trace you asked for, returned in 30 seconds." Design the schema for that query pattern from Day 1.

Decision 2 — How are model versions pinned and rotated? When the AI's behaviour changes — and it will, because models update — you must be able to answer "which model version produced this output, and when did it deploy?" Most production teams treat the model as a SaaS-style auto-updating dependency. Compliance frameworks require treating it as a versioned artifact with explicit rollover semantics. Pin the model version in your deployment configuration; log the version with every decision; never auto-upgrade in production without explicit deployment.

Decision 3 — What's the human-in-the-loop checkpoint? EU AI Act Article 14 requires meaningful human oversight for high-risk systems. GDPR Article 22 limits solely-automated decision-making with significant effects. The architectural pattern that satisfies both is a contestability checkpoint: any AI decision that significantly affects an individual flows through a human reviewer who can confirm, modify, or reject the AI's output before action is taken. This is not the same as "humans review the dashboard quarterly." It's a per-decision review mechanism, designed into the workflow.

Where to Start (Honest)

If you are deploying your first production AI workload at a service business with 20-150 staff, the order of operations is: (1) classify your AI use case under the EU AI Act risk tiers; (2) confirm SOC 2 status with any vendor you're evaluating; (3) ensure HIPAA or sector-specific frameworks are met if applicable; (4) wire in the 9 controls from the checklist above as part of the architecture, not as phase-2; (5) document everything as you go.

A practical sequence we've found works for service businesses on this size band: in week 1, classify the use case and identify which of the five frameworks apply. In week 2, conduct the vendor review against the five compliance questions above. In week 3, design the architecture so all 9 controls are present from the first deployed line of code. By the time the system goes to production in week 4, the audit-readiness work has already happened — not because compliance was prioritised over engineering, but because the engineering and the compliance were the same workstream.

A common pushback we hear from operations leaders: "Our compliance team will review this when it's ready." That sequence is the most expensive sequence. By the time the compliance team reviews a deployed system, the architecture decisions that determine compliance posture have already been made — and reversing them costs 2-3× the original engineering budget. Bring the compliance lens into the architecture review on Day 1, not Day 90.

Two other practical observations. First, vendor compliance posture changes faster than vendor pricing — a vendor that wasn't SOC 2 attested 12 months ago may be now. Re-validate vendor compliance status at the start of every procurement cycle, not based on stale information. Second, the EU AI Act's high-risk requirements taking effect in August 2026 are the closest hard deadline most service businesses have for AI compliance work. If your AI use case falls under high-risk classification, the conformity assessment work needs to be substantially complete before that date — not started after it.

The single biggest predictor of compliance success is treating governance as part of the engineering work — not as a separate checkbox-driven exercise that happens after deployment. KORIX BYOS deployments are designed around this principle: governance is a Day-1 architecture decision, not a phase-2 add-on. Our broader treatment of Governed AI covers the design philosophy in depth.

For the cost-decision angle, our breakdown of what custom AI actually costs in 2026 walks through how compliance-from-Day-1 changes the budget. For vendor selection, our 8-criteria evaluation framework covers the contract terms that determine compliance ownership. For the wider question of consulting vs implementation, see our AI Consultancy vs AI Deployment Buyer's Guide.

KORIX defines AI compliance as the architecture decisions made on Day 1 that determine whether your first audit takes two weeks or six months. The 9-point checklist above is the operational expression of that definition. Build to it from the start, or rebuild later. There is no third option.

AI compliance is now five frameworks deep. Build governance from Day 1 — not after the audit notice arrives.

SOC 2 is the de facto B2B requirement; GDPR enforcement has tightened with AI-specific fines reaching €345M; HIPAA penalties run up to $1.5M per violation; the EU AI Act's high-risk system requirements take effect August 2026; and the NIST AI Risk Management Framework is now the default U.S. governance reference. Service businesses deploying AI in 2026 can no longer treat compliance as a phase-2 deliverable. The 9-point checklist in this article is the minimum bar — and it's designed into the architecture from the start, not bolted on after a regulator asks the first question.

Continue learning —

go deeper.

What AI compliance frameworks apply to service businesses in 2026?

Five frameworks dominate. SOC 2 (Type II) is the de facto B2B requirement — enterprise customers won't sign contracts without it. GDPR applies to any system processing EU resident data, with AI-specific enforcement intensifying since 2024. HIPAA applies to healthcare data, with penalties up to $1.5M per violation. The EU AI Act entered force on 1 August 2024 with high-risk system requirements taking effect in August 2026 — it applies globally to any AI serving EU users. The NIST AI Risk Management Framework is the default U.S. governance reference for federal contracts and increasingly for private-sector procurement. Industry-specific rules (PCI-DSS for payment data, FERPA for education, GLBA for financial services) add a sixth layer where applicable.

Do I need SOC 2 for an AI tool used internally?

If the AI tool only processes internal data and never connects to customer systems or customer data, SOC 2 is not legally required. But three things have changed in 2026: (1) most enterprise customers now require SOC 2 of their vendors regardless of internal/external scope; (2) cyber insurance underwriters increasingly demand SOC 2 attestation as a condition of coverage; (3) AI-specific governance gaps that SOC 2 controls catch (audit trails, access control, incident response) tend to surface as production failures even in internal-only systems. SOC 2 has become a quality bar, not just a contractual compliance item.

What does the EU AI Act require for service businesses in 2026?

The EU AI Act classifies AI systems into four risk tiers: prohibited, high-risk, limited-risk, and minimal-risk. Most service-business AI deployments fall under limited-risk (transparency obligations) or high-risk (full compliance regime). High-risk systems include AI used in employment decisions, credit scoring, healthcare diagnostics, education, and critical infrastructure — these require risk management systems, data governance, human oversight, accuracy testing, and conformity assessment. The high-risk requirements take effect 1 August 2026. If your AI serves any EU user (employees, customers, partners), the EU AI Act applies regardless of where your business is headquartered.

How much does AI compliance cost in 2026?

SOC 2 Type II audit: $30,000-$70,000 for a mid-market service business, plus 6-9 months of preparation effort and ongoing remediation. HIPAA compliance program: $20,000-$50,000 initial setup plus annual review. GDPR DPO + ongoing program: typically $50,000-$150,000/year for service businesses with EU exposure. EU AI Act conformity assessment for high-risk systems: $20,000-$80,000 per system depending on complexity. The combined first-year compliance cost for a mid-market service business deploying AI under all four frameworks typically lands between $120,000-$350,000. Building compliance into the AI architecture from Day 1 cuts this roughly in half versus retrofitting after the fact.

What's the biggest mistake service businesses make with AI compliance?

Treating compliance as a phase-2 deliverable. The single most expensive pattern I see is firms that ship AI systems without audit trails, then discover during their first compliance review that they cannot trace why the AI made a specific decision — and the only fix is rebuilding the system from the architecture up. Building audit trails, model version pinning, decision-trace logging, and human approval checkpoints is straightforward when designed in from Day 1. Bolting them on afterward typically costs 2-3× the original build budget. Our /learning-center/what-is-governed-ai breakdown covers this in depth.