A data‑intensive organisation relied heavily on visual inputs—images from operations, inspections, and user submissions—but lacked the ability to process them at scale. Manual review created delays, inconsistency, and operational blind spots.

Leadership needed a way to turn unstructured images into reliable, decision‑ready visual intelligence without losing accuracy, traceability, or control.

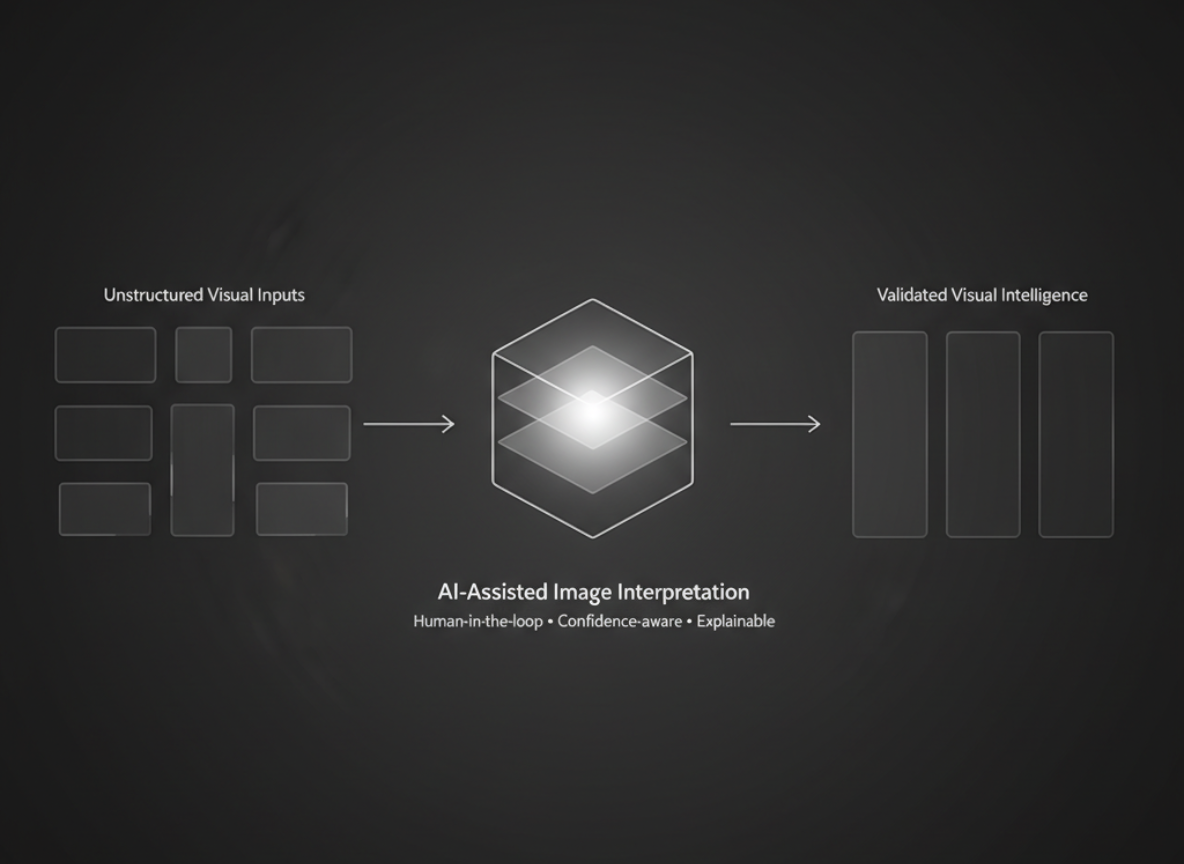

This work focused on AI‑driven visual intelligence and image processing to transform unstructured image data into structured, validated insights for enterprise teams.

Designed for operations, risk, and technology leaders in image‑heavy organisations in the US, the UK, and other growing markets.

Challenges in Visual Intelligence & Image Processing

Manual image review at scale

Large volumes of images required human inspection, leading to slow turnaround times, fatigue-driven errors, and limited throughput as demand increased. Manual image processing and visual inspection workflows could not scale reliably and produced inconsistent outcomes across teams.

02

Inconsistent interpretation of visual data

Different reviewers applied different judgement criteria, resulting in non-standardised outcomes and difficulty enforcing quality benchmarks.

03

Lack of structured metadata

Images were stored as raw files without meaningful tagging or contextual enrichment, making search, audit, and downstream analysis difficult.

04

Risk of false positives and blind automation

Leadership was concerned that naive computer vision models could introduce silent errors without clear escalation or review mechanisms.

These challenges are common for teams that rely on images for day‑to‑day decisions but still treat them as raw files rather than structured visual data.

Have image-heavy workflows and want to see what AI-driven visual intelligence could look like in your context?

Talk to an AI systems expert


AI-Driven Approach to Visual Intelligence

Problem-first computer vision design

We began by mapping where visual interpretation actually influenced business decisions, separating signal-critical use cases from low-value automation opportunities.

02

Human-in-the-loop model architecture

AI models were designed to assist and prioritise human review, not replace it: they flagged anomalies and edge cases explicitly and attached a confidence score to each output. Computer vision models were applied selectively to support human judgement while remaining explainable, auditable, and confidence-aware.

03

Standardised visual intelligence pipeline

Image ingestion, preprocessing, classification, and review workflows were modularised, creating consistency across teams and use cases.

04

Explainability and auditability by design

Every AI output was paired with confidence indicators, visual highlights, and traceable decision logs to support governance and compliance.

This approach made visual interpretation a governed, explainable system—not a collection of isolated computer vision experiments.
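As a rough illustration of the human-in-the-loop routing and decision logging described above, the sketch below sends each model output down one of three review paths based on its confidence and writes a traceable log line. It is a minimal sketch, not the delivered system: the threshold values, the `Prediction`, `triage`, and `log_entry` names, the route labels, and the sample data are all assumptions made for the example.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone

# Hypothetical thresholds; real values would be tuned per use case.
AUTO_ACCEPT_AT = 0.95
FULL_REVIEW_BELOW = 0.60


@dataclass
class Prediction:
    image_id: str
    label: str
    confidence: float  # model score in [0, 1]


def triage(pred: Prediction) -> str:
    """Decide how much human attention a prediction needs."""
    if pred.confidence >= AUTO_ACCEPT_AT:
        return "auto_accept"   # still logged, so it stays auditable
    if pred.confidence < FULL_REVIEW_BELOW:
        return "full_review"   # low confidence: escalate to a reviewer
    return "quick_check"       # mid confidence: reviewer confirms the label


def log_entry(pred: Prediction, route: str) -> str:
    """Build one decision-log line as JSON (field names are illustrative)."""
    record = asdict(pred)
    record["route"] = route
    record["logged_at"] = datetime.now(timezone.utc).isoformat()
    return json.dumps(record, sort_keys=True)


if __name__ == "__main__":
    samples = [
        Prediction("img-001", "defect", 0.98),
        Prediction("img-002", "defect", 0.72),
        Prediction("img-003", "unknown", 0.41),
    ]
    for p in samples:
        print(log_entry(p, triage(p)))
```

Keeping the thresholds as named constants makes the trade-off between automation and review effort explicit and easy to revisit.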

Outcomes of AI-Driven Visual Intelligence

Significant reduction in manual workload

AI pre-classification reduced the volume of images requiring full human review, accelerating operational throughput.

02

Improved consistency in visual decisions

Standardised AI‑assisted interpretation reduced subjective variation across teams and locations.

03

Actionable visual data at scale

Previously unstructured image repositories became searchable, analysable, and decision-ready assets. Visual data was converted into structured, validated intelligence that could be trusted and reused across enterprise decision-making workflows.

04

Future-proof visual intelligence foundation

The system was designed to support new vision models and use cases without disrupting existing workflows.

Together, these outcomes turned unstructured image repositories into a reliable visual intelligence layer that could support growth across teams and regions.
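One way to picture a pipeline that accepts new models without disrupting existing workflows is as an ordered list of interchangeable stages. The sketch below is illustrative only: `ImageRecord`, `run_pipeline`, and the stub stages are hypothetical names, and the classifier is a placeholder rule rather than a real vision model.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class ImageRecord:
    image_id: str
    raw: bytes
    metadata: Dict[str, object] = field(default_factory=dict)
    stages_run: List[str] = field(default_factory=list)


# A stage takes a record and returns the (possibly enriched) record.
Stage = Callable[[ImageRecord], ImageRecord]


def preprocess(rec: ImageRecord) -> ImageRecord:
    # Stand-in for resizing or normalisation: just record the payload size.
    rec.metadata["size_bytes"] = len(rec.raw)
    return rec


def classify_stub(rec: ImageRecord) -> ImageRecord:
    # Placeholder for a real model call; this rule is purely illustrative.
    rec.metadata["label"] = "needs_review" if rec.raw else "empty"
    return rec


def run_pipeline(rec: ImageRecord, stages: List[Stage]) -> ImageRecord:
    """Apply each stage in order and remember which stages ran."""
    for stage in stages:
        rec = stage(rec)
        rec.stages_run.append(stage.__name__)
    return rec


if __name__ == "__main__":
    baseline = [preprocess, classify_stub]
    result = run_pipeline(ImageRecord("img-001", b"\x89PNG"), baseline)
    print(result.metadata, result.stages_run)
```

Under this design, adding a new model means appending another stage function to the list while the existing stages stay untouched.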

What These Engagements Share

Clear system boundaries before execution

Systems were defined upfront—what visual inputs they handle, what they ignore, and who owns each part—before any AI automation was deployed.

02

Trade-offs made explicit rather than implicit

Decisions about speed, accuracy, effort, and risk were documented clearly so teams understood what they were gaining and what they were giving up.

03

Documentation that supported future iteration

The visual intelligence pipeline, assumptions, and edge cases were documented as a living system so future teams could extend it without reverse‑engineering everything.

04

Emphasis on long-term sustainability over short-term gains

Architecture, models, and workflows were designed to support new vision use cases, not just the first pilot or demo.

This consistency is deliberate: it lets teams grow visual intelligence capabilities without re-solving the same structural problems in every new project.

How We Define Success

Remain reliable under increasing image volume

The system keeps performing as image volumes, use cases, and edge cases grow—without constant firefighting or brittle patches.

02

Support better visual decision-making

Outputs are trustworthy, explainable, and timely so reviewers and managers can make stronger decisions with less manual checking.

03

Reduce operational complexity

Visual review becomes simpler to run, monitor, and hand over, rather than adding hidden steps and fragile manual workflows.

04

Scale without rework, aligned with business objectives

New computer vision models and use cases can be added on top of the existing pipeline without throwing away core components or drifting from business goals.

These factors decide whether the visual intelligence system stays useful long after the initial delivery.

Tech Stack

Backend

Python (FastAPI) services to orchestrate image ingestion, preprocessing, and review workflows

02

Computer vision

Modern cloud vision APIs and custom models for classification, object detection, and visual feature extraction

03

AI & decision layer

Transformer‑based models and rules/thresholds to combine AI signals with human judgement and escalation paths

04

Data & storage

Object storage for raw and processed images, PostgreSQL for structured visual intelligence and audit logs

05

Integrations

REST/JSON APIs to connect visual intelligence outputs into existing operational systems and dashboards

06

Infrastructure & operations

Containerised services (Docker) running on major cloud providers with monitoring, logging, and alerting for production use

07

Quality & testing

Automated tests for core services and validation logic, plus monitoring to catch anomalies and regression issues early

Together, this stack turns unstructured image streams into a governed visual intelligence layer that can support new models and use cases without redesigning the core system.
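To make the data and storage layer more concrete, the sketch below keeps one structured row per image alongside an append-only audit log. It uses Python's built-in `sqlite3` as a stand-in for PostgreSQL so the example runs without a database server. The table names, columns, status values, and helper functions are assumptions for illustration, not the schema used in the engagement.

```python
import sqlite3

# Hypothetical schema: one structured row per image plus an audit trail.
SCHEMA = """
CREATE TABLE image_insight (
    image_id    TEXT PRIMARY KEY,
    storage_key TEXT NOT NULL,   -- pointer to the raw file in object storage
    label       TEXT NOT NULL,
    confidence  REAL NOT NULL,
    status      TEXT NOT NULL    -- e.g. auto_accept or full_review
);
CREATE TABLE audit_log (
    id        INTEGER PRIMARY KEY AUTOINCREMENT,
    image_id  TEXT NOT NULL REFERENCES image_insight(image_id),
    actor     TEXT NOT NULL,     -- model name or reviewer id
    action    TEXT NOT NULL,
    logged_at TEXT NOT NULL DEFAULT CURRENT_TIMESTAMP
);
"""


def open_store() -> sqlite3.Connection:
    """Create an in-memory database with the example schema."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    return conn


def record_insight(conn, image_id, storage_key, label, confidence, status, actor):
    """Store the structured result and its audit entry in one transaction."""
    with conn:  # commits both rows together, or rolls both back on error
        conn.execute(
            "INSERT INTO image_insight VALUES (?, ?, ?, ?, ?)",
            (image_id, storage_key, label, confidence, status),
        )
        conn.execute(
            "INSERT INTO audit_log (image_id, actor, action) VALUES (?, ?, ?)",
            (image_id, actor, f"classified:{label}"),
        )


def needs_review(conn):
    """Return ids of images not auto-accepted, lowest confidence first."""
    rows = conn.execute(
        "SELECT image_id FROM image_insight "
        "WHERE status != 'auto_accept' ORDER BY confidence"
    ).fetchall()
    return [row[0] for row in rows]


if __name__ == "__main__":
    conn = open_store()
    record_insight(conn, "img-1", "raw/img-1.jpg", "defect", 0.55, "full_review", "model-v1")
    record_insight(conn, "img-2", "raw/img-2.jpg", "ok", 0.99, "auto_accept", "model-v1")
    print(needs_review(conn))
```

Writing the insight row and the audit row in the same transaction means a stored result can always be traced back to the model or reviewer that produced it.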